Let me start my commentary on Hossenfelder’s material by confessing that I sympathize with her work and tend to like her incisive, provocative style. Her voice opposes and slows down the descent of physics into fantasy informed by notions of beauty, as opposed to predictive models informed by hard-nosed empirical observations. As someone disappointed with the failure of supersymmetry, which inspired my younger years at CERN, I realize how important it is to keep our eyes on the proverbial empirical ball. However, despite Hossenfelder’s zeal to stay true to empiricism, she may have now failed it for the sake of safeguarding a physicalist metaphysical commitment.

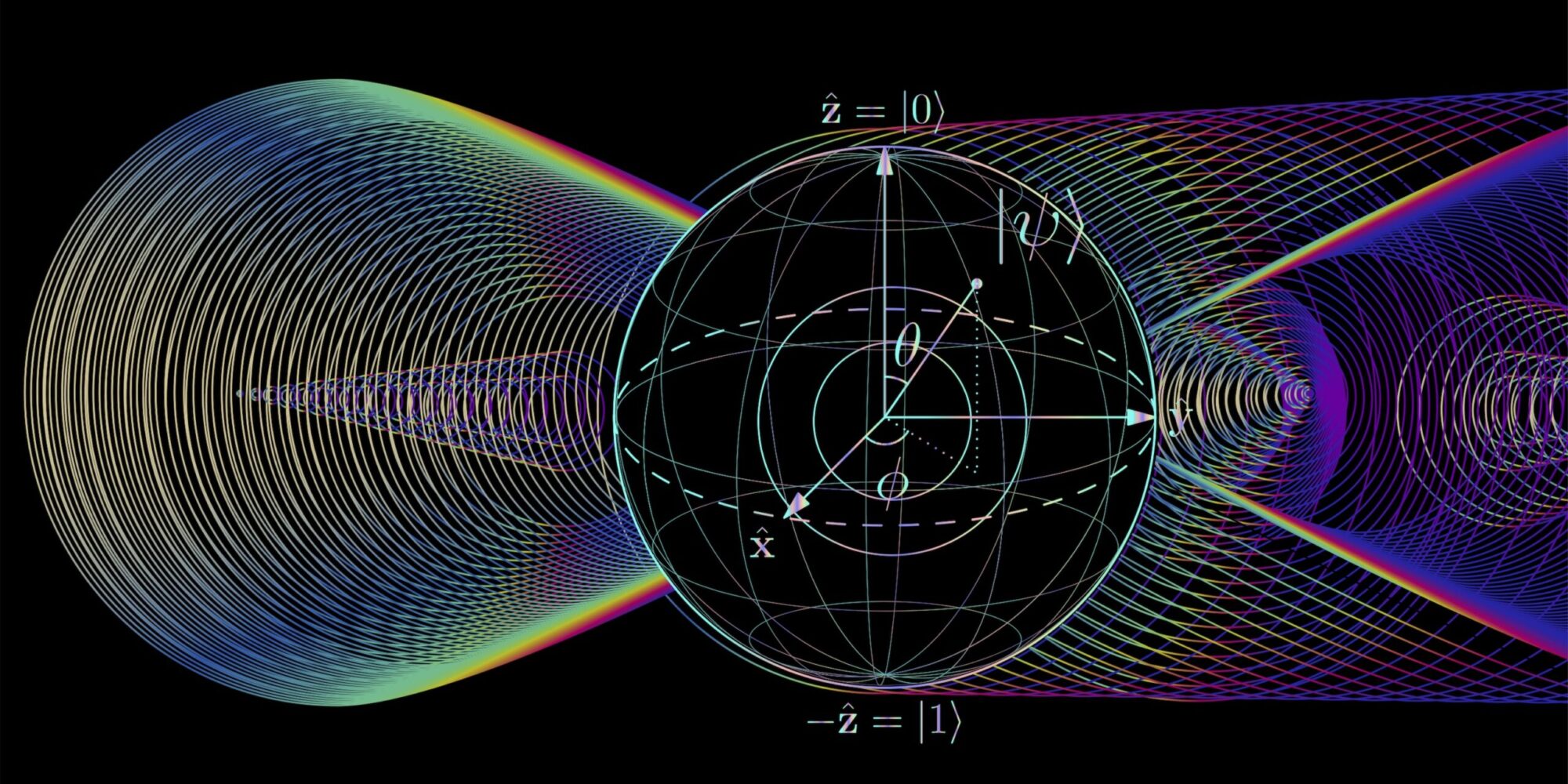

Hossenfelder’s view is that the experimental results in question can be accounted for by ‘superdeterminism.’ The idea is that the particles must have some hidden properties that we know nothing about. These hidden properties presumably take part in a complex causal chain that encompasses the settings of the detectors used to make measurements. In other words, the detectors’ settings somehow influence something hidden about the particles measured. And since the choice of what to measure is necessarily reflected in those settings, the measurement results depend on that choice. She summarizes superdeterminism thus: “What a quantum particle does depends on what measurement will take place.”

If one posits that quantum particles are nature’s fundamental building blocks, then Hossenfelder’s summary above is strictly incorrect: we define quantum particles in terms of their properties, and their properties depend on what measurement will take place. Therefore, the accurate formulation would be that “what a quantum particle [is] depends on what measurement will take place,” not what it does. Formulated this way—i.e. correctly—the statement starts to look a little less intuitive than Hossenfelder makes it out to be. After all, how can what a particle is depend on what is measured about it? Shouldn’t measurement simply reveal what the particle already was, just as it does in the case of a table? How can the particle change what it is merely because the detector is set up in a different way?

But let us be charitable towards Hossenfelder. According to Quantum Field Theory, there are no such things as particles; the latter are merely metaphors for particular patterns of excitation of an underlying quantum field. And one can reasonably state that the quantum field does those excitations, for excitations are behaviors of the field. Therefore, “what the quantum [field] does depends on what measurement will take place.” That’s accurate enough and seems, at first, to restore plausibility to Hossenfelder’s argument.

The problem is that she is asking us to imagine the existence of things for which we have no direct empirical evidence; after all, they are “hidden.” She is also asking us to grant these invisible things some very specific and non-trivial capabilities: they must somehow—neither Hossenfelder nor anyone else has ever specified how—change, in some very particular ways, in response to the settings of the detector. Mind you, detectors are designed precisely to minimize disturbances to the state of what is measured. Hossenfelder’s imagined hidden variables would have to somehow overcome this barrier as well.

Let us use a metaphor to illustrate what we are being asked to believe. When I take a picture of some celestial body in the night sky—say, the moon—I can set my camera in a variety of different ways. I can, for instance, set aperture and exposure time to a variety of different values. What Hossenfelder is saying, in the context of this metaphor, is that there is some hidden and mysterious something about the moon that changes in response to what aperture or exposure I set on my camera. What the moon does up there in the sky somehow—we’re not told how—depends on how I set my camera here on the ground. This is superdeterminism in a nutshell and you be the judge of its plausibility.

Be that as it may, appealing to hidden variables is inevitably an appeal to a vague, undefined unknown; and not just any vague unknown, but one capable of non-trivial interactions with its environment. The same criticism Hossenfelder leverages against, for instance, superstring theory can be leveraged, verbatim, against hidden variables: we’re appealing to imaginary entities for which there is no direct empirical evidence. To put it in plain English, we have no reason to believe in this fantastic invisible stuff, other than the wish to save physicalism.

Hossenfelder could argue that the peculiarities of quantum mechanical measurements—the very problem at hand—are themselves evidence of hidden variables. But this, obviously, would beg the question entirely: quantum measurements can only be construed as evidence of hidden variables if one presupposes that hidden variables are responsible for them to begin with. In precisely the same way, only if I presuppose the existence of the—equally hidden—Flying Spaghetti Monster, who moves the celestial bodies around their orbits using His invisible noodly appendages, can the movements of the celestial bodies be construed as evidence of the existence of the Flying Spaghetti Monster.

In one of her papers [2], Hossenfelder speculates about a possible type of experiment that could one day substantiate superdeterminism. The proposal is to make multiple series of measurements on a quantum system, each based on the same initial conditions. If the series are determined by the system’s initial conditions, as superdeterminism postulates, we should see time-correlations across the different series that deviate from quantum mechanical predictions. The obvious problem, however, is that to reproduce the system’s initial state one needs to reproduce the initial values of the postulated hidden variables as well. But Hossenfelder has no idea what the hidden variables are, so she can’t control for their initial states and the whole exercise is pointless. To her credit, she admits as much in her paper. She then proceeds to speculate about some scenarios under which we could, perhaps, still derive some kind of indication from the experiment, even without being able to control its conditions. But the idea is so loose, vague and imprecise as to be useless.

Indeed, Hossenfelder’s proposed experiment has a critical and fairly obvious flaw: it cannot falsify superdeterminism. Therefore, it’s not a valid experiment, for we’ve known since Popper in the 1930s that valid experiments motivated by a hypothesis are supposed to be able to falsify the hypothesis. More specifically, if Hossenfelder’s experiment shows little time-correlation between the distinct series of measurements, she can always (a) say that the series were not carried out in sufficiently rapid succession, so the initial state drifted; or (b) say that there aren’t enough samples in each measurement series to find the correlations. The problem is that (a) and (b) are mutually contradictory: a long series implies that the next series will happen later, while series in rapid succession imply fewer samples per series. So the experiment is, by construction, incapable of falsifying hidden variables.
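The tension between (a) and (b) is simple arithmetic, and a toy sketch may make it concrete. The code below is only an illustration under stated assumptions: every measurement is assumed to take the same fixed time, series are assumed to run back to back with no idle time, and the helper name `gap_between_series` is hypothetical. None of it comes from Hossenfelder’s paper.

```python
def gap_between_series(samples_per_series: int, seconds_per_sample: float) -> float:
    """Time from the start of one series to the start of the next.

    Assumption (not from the source): series run back to back and each
    sample takes the same fixed time, so the gap equals the duration of
    one full series.
    """
    return samples_per_series * seconds_per_sample


# Hypothetical numbers, chosen only to show the trend.
SECONDS_PER_SAMPLE = 0.5
gaps = {n: gap_between_series(n, SECONDS_PER_SAMPLE) for n in (10, 100, 1000)}

for n, gap in gaps.items():
    # More samples per series (answering objection b) means a longer wait
    # before the next series begins (inviting objection a), and vice versa.
    print(f"{n:5d} samples per series -> next series starts {gap:7.1f} s later")
```

Under these assumptions the gap grows in direct proportion to the number of samples per series, so tightening one condition necessarily loosens the other.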

In conclusion, no, hidden variables have no empirical substantiation, neither in practice nor in principle; neither directly nor indirectly.

You see, I would like to say that hidden variables are just imaginary theoretical entities meant to rescue physicalist assumptions from the relentless clutches of experimental results. But even that would be saying too much; for proper imaginary entities entailed by proper scientific theories are explicitly and coherently defined. For instance, we knew what the Higgs boson should look like before we succeeded in measuring its footprints; we knew what to look for, and thus we found it. But hidden variables aren’t defined in terms of what they are supposed to be; instead, they are defined merely in terms of what they need to do in order for physical properties to have standalone existence. If I were tasked with looking for hidden variables—just as I once was tasked with looking for the Higgs boson—I wouldn’t know even how to begin, because we are not told by Hossenfelder what they are supposed to be. She is just furiously waving her hands and saying, “there has to be something (I have no clue what) that somehow (I have no clue how) does what I need it to do so I can continue to believe in a physicalist metaphysics.”

This is akin to the medieval notion of ‘effluvium,’ an imaginary elastic material that supposedly connected—invisibly—chaff to amber rods. Effluvium was meant to account for what we today understand to be electrostatic attraction, a field phenomenon. Medieval scholars observed that chaff somehow clung to amber rods when the latter were rubbed. Therefore—since they had no notion of fields—they figured that there had to be some material connecting chaff to rod through direct contact, right? After all, everything that happens in nature happens through direct material contact, right? Never mind that such material was invisible (hidden!), couldn’t be felt with the fingers, couldn’t be cut or measured directly, and that no one had the faintest idea what it was supposed to be, beyond defining it in terms of what it allegedly did to chaff; it just had to be there.

Hidden variables are Hossenfelder’s effluvium: there must be some mysterious invisible something that somehow does what needs to be done for us to think of physical entities as having standalone existence, right? Because measurable physical entities are all that exists and, as such, must have standalone existence… right?

On a more technical note, Hossenfelder bases her entire discussion on Bell’s inequalities but conspicuously fails to mention Leggett’s inequalities [4], a 21st-century extension of Bell’s work, more relevant to the points in contention, which separates the hypotheses of physical realism and locality so they can be tested independently. Neither does she address the experimental work done to verify Leggett’s inequalities, which later refuted physical realism rather specifically [5, 6]. Even more recent experiments have also demonstrated that physical quantities aren’t absolute, but contextual (i.e. relative, or ‘relational’) [7, 8], thereby contradicting superdeterminism. By now, a broad class of hidden variables has been refuted by experiments [9], which Hossenfelder doesn’t comment on at all.
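For readers who want the quantitative content behind Bell’s inequalities, here is a minimal sketch of their best-known form, the CHSH inequality. It is not taken from Hossenfelder’s material or from the cited papers; the function names and the particular detector angles are illustrative assumptions. The sketch compares the standard quantum prediction for a spin-singlet pair, whose correlation is the negative cosine of the angle between the two detector settings, with the largest value any local deterministic assignment of outcomes can reach.

```python
import itertools
import math


def singlet_correlation(a: float, b: float) -> float:
    """Quantum prediction for a spin-singlet pair measured at angles a, b (radians)."""
    return -math.cos(a - b)


def chsh(e_ab: float, e_ab2: float, e_a2b: float, e_a2b2: float) -> float:
    """CHSH combination S = E(a,b) - E(a,b') + E(a',b) + E(a',b')."""
    return e_ab - e_ab2 + e_a2b + e_a2b2


# Illustrative detector settings (an assumption; these maximize |S| for the singlet).
a, a2 = 0.0, math.pi / 2
b, b2 = math.pi / 4, 3 * math.pi / 4

s_quantum = chsh(
    singlet_correlation(a, b),
    singlet_correlation(a, b2),
    singlet_correlation(a2, b),
    singlet_correlation(a2, b2),
)

# Local deterministic model: each particle carries predetermined outcomes (+1 or -1)
# for both settings of its own detector, fixed independently of which setting is chosen.
s_local_max = max(
    abs(chsh(A * B, A * B2, A2 * B, A2 * B2))
    for A, A2, B, B2 in itertools.product((-1, 1), repeat=4)
)

print(f"|S| quantum (singlet): {abs(s_quantum):.4f}")
print(f"|S| local hidden-variable maximum: {s_local_max}")
```

The quantum value is 2√2, above the local bound of 2. That bound relies on the hidden variables being statistically independent of the detector settings. Superdeterminism drops exactly this assumption, which is why it is not ruled out by Bell-type experiments alone.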

Instead, she bases her case on the notion that the opponents of hidden variables dislike the latter simply because the hypothesis supposedly contradicts free will. While I am sure that there are physicists emotionally committed to free will, refuting their emotional commitments does not validate superdeterminism. Suggesting that it does is a straw man. I, for one, am on record stating that free will is a red herring [10] and don’t base my case against superdeterminism on it at all. I don’t need to.

We have to guard against turning the particular metaphysical assumptions of our time into an unfalsifiable system; one that accommodates anomalies through arbitrary, fantastical appeals to unknowns and vague, promissory hand waving. When the anomalies that contradicted Ptolemaic and Copernican astronomy—according to which the celestial bodies move in perfectly circular orbits—began to be observed, adherents came up with fantastical ‘epicycles’—circles moving on other circles—to accommodate the anomalies. The tortuous cumbersomeness of the resulting models should have been enough to force them to take a step back and contemplate their dilemma in a more intellectually honest manner. But subjective attachment to a particular set of metaphysical assumptions didn’t allow them to do so.

Today, as anomalies accumulate in various branches of science against the metaphysical assumptions of physicalism, we would do well to avoid a humiliating repetition of the epicycles affair. Instead, what we are now witnessing are hypotheses being put forward, with a straight face, that fly in the face of any honest notion of reason and plausibility. The epicycles look benign and reasonable in comparison to the willingness of some 21st-century theoreticians to believe in fantasy. I shall comment more broadly on this peculiar phenomenon, a harbinger of paradigm changes, in my next essay for this magazine.

References

[1] http://web.stanford.edu/~alinde/SpirQuest.doc

[2] https://arxiv.org/pdf/1912.06462.pdf

[3] https://arxiv.org/abs/2010.01324

[4] https://link.springer.com/article/10.1023/A:1026096313729

[5] https://www.nature.com/articles/nature05677

[6] https://iopscience.iop.org/article/10.1088/1367-2630/12/12/123007/meta

[7] https://arxiv.org/abs/1902.05080

[8] https://www.nature.com/articles/s41567-020-0990-x

[9] https://physicsworld.com/a/quantum-physics-says-goodbye-to-reality/

[10] https://blogs.scientificamerican.com/observations/yes-free-will-exists/